Large Sample Library: User Guide

The Large Sample Library lets you run a Monte Carlo simulation for a large sample size and/or large model that would otherwise exhaust computer memory. It runs the model with a series of small batch samples and aggregates the results of the batches into a large sample for analysis of the resulting distributions. This Guide describes how to use this library.

Introducing the Large Sample Library

This library makes it easy to break up a large sample into a series of batch samples, each small enough to run in memory. It accumulates these batches into a large sample for selected variables, known as the Large Sample Variables or LSVs. You can then view the probability distributions for each LSV using the standard methods (confidence bands, PDF, CDF, etc.) with the full precision of the large sample. It saves memory by not storing large sample results for intermediate variables that are not LSVs.
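The batching idea can be illustrated outside Analytica with a minimal Python sketch. This is not the library's implementation: the toy model, the variable names (`cost`, `margin`, `scratch`, `profit`) and the function names are all hypothetical. It only shows that intermediate variables exist for one batch at a time, while the selected variables are accumulated to the full sample size.

```python
import random

def run_batch(batch_size, rng):
    """Hypothetical toy model: compute every variable for one small batch."""
    cost = [rng.gauss(100.0, 15.0) for _ in range(batch_size)]    # chance variable
    margin = [rng.uniform(0.1, 0.3) for _ in range(batch_size)]   # chance variable
    scratch = [c * 2.0 for c in cost]                             # intermediate, not an LSV
    profit = [s * m for s, m in zip(scratch, margin)]             # output of interest
    return {"cost": cost, "margin": margin, "scratch": scratch, "profit": profit}

def run_large_sample(large_size, batch_size, lsv_names, seed=0):
    """Run the model batch by batch, keeping samples only for the named LSVs."""
    rng = random.Random(seed)
    large = {name: [] for name in lsv_names}
    remaining = large_size
    while remaining > 0:
        n = min(batch_size, remaining)       # the last batch may be smaller
        batch = run_batch(n, rng)            # all variables, but only n samples each
        for name in lsv_names:
            large[name].extend(batch[name])  # accumulate the selected variables
        remaining -= n                       # the rest of the batch is discarded
    return large

result = run_large_sample(large_size=10_000, batch_size=1_000, lsv_names=["profit"])
```

After the run, `result` holds 10,000 samples of `profit` and nothing else, so peak memory is driven by the batch size plus the stored LSVs rather than by the full model at the large sample size.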

If you are running 64-bit Analytica, it can use virtual memory (VM) when it runs out of random access memory (RAM), which significantly expands the amount of memory available, up to around 100GB in some cases. However, using VM tends to slow down calculations considerably. So even if you can run your model with the sample size you want using VM, you may well find that using the Large Sample Library substantially speeds up the computation by avoiding the need for VM.

You can download the Large Sample Library v10.ana file from here.

How to load the library

First, load the library into the model in the usual way:

- Download Large Sample Library v10.ana.

- Open your model in Analytica.

- Switch to Edit mode.

- Select Add library… or Add module… from the File menu, and browse to find the Large Sample Library v10.ana file.

- In the Add a module or library dialog, select embed a copy and click OK.

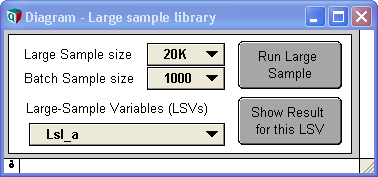

Once it is loaded, open the Large Sample Library module. It looks like this:

Select the LSVs to compute with large samples

The library computes and saves large samples only for selected variables, termed the Large Sample Variables or LSVs.

After loading the library, the first thing to do is to click the button “Set up Large Sampling”. It will then treat all uncertain variables in the model that have Output nodes as LSVs. Uncertain means that they are probabilistic — i.e. they are chance variables or depend on chance variables (or, more precisely, on variables that are defined with a distribution function). For more on Output nodes, see User output nodes in the Analytica User Guide.

Usually, this choice of LSVs is what you want, and you can proceed straight to Select Large sample size and Batch Sample size below. If your model has no LSVs (most likely because it has no Output nodes), or it has too many, continue with this Section.

To determine whether output nodes are probabilistic, the library evaluates them in sample mode with a sample size of 1. In some large models, even this may take a long time. If your model flags any evaluation errors, you may need to correct those.

To add LSVs

To include another uncertain variable as an LSV, simply create an Output node for it. To do this, select the variable node, and select Make Output node from the Object menu. Drag the new Output node to wherever you would like it.

To select fewer LSVs

If you have too many LSVs, or if some are large arrays with large or many indexes, your computer may not have enough memory to save Large sample results for all of them. In that case, you may specify as LSVs only those few variables whose probability distributions it is important to view using a large sample.

Instead of treating all uncertain variables with Output nodes in the entire model as LSVs, you can select as LSVs only those variables with Output nodes in a specific module (and its submodules). Simply select the module you want from “Find LSVs in this module” and click “Set up Large Sampling”. It will list the new LSVs in the pulldown menu “Large-Sample Variables (LSVs)”. If there are no LSVs in the selected module, it will give a warning.

What if you want to select as LSVs a set of variables that are not all contained in the same existing module? Simply create a new module and add Output nodes into it for the variables you want as LSVs. Then click “Set up Large Sampling” to find the module. Select the new module in “Find LSVs in this module” and click “Set up Large Sampling” again. The new LSVs will now appear in the menu below “Large-Sample Variables (LSVs)”.

To avoid evaluation during Set up

To determine which output variables are probabilistic, the large sample library evaluates each output node using a sample size of 1. Those variables whose sample is not indexed by Run can then be filtered out of the list of LSVs, and the simulation does not need to use space to cache their computed sample values.

If you are certain that all your output nodes are probabilistic, you may want to forgo this check in order to speed up the Setup process. Also, some models produce errors when run with a sample size of 1. For example, Correlation cannot be computed from a single sample, and the resulting errors or warnings may impede your ability to get the Large Sample Library properly set up.

You can thus avoid this evaluation step by unchecking the Uncertain LSVs only checkbox before pressing Set up Large Sampling. All variables with outputs in the selected module will then be included as LSVs. It is possible that some will be non-probabilistic.

Reflecting changes to the model

If you make any changes to the model that will affect the LSVs — for example, adding or deleting Output nodes for uncertain variables — simply click “Set up Large Sampling” again to make sure the LSVs reflect these changes.

Distributing the model for end users

If you are distributing the model in a Browse-only form (saved from the Enterprise edition of Analytica), you may want to hide the lower section of controls by dragging up the bottom edge of the Diagram window for the Large Sample library, so that it looks like this:

This will avoid confusing end users by hiding controls that they don’t need to use.

Using statistical functions

There are some special requirements and limitations for variables that use statistical functions, such as Mean, GetFract, or RankCorrel. See Variables using Statistical Functions below for details.

Dealing with evaluation errors

When you press the Set up Large Sampling button, you may encounter evaluation errors in your model that you normally don't see. These may occur because the Large Sample Library attempts to evaluate each of your output variables in probabilistic mode. You may have output variables that you only evaluate normally in Mid mode, so you don't see these errors.

To avoid these errors, you may need to set up a new module to house your desired LSVs, making sure that all the nodes in that module can be computed in probabilistic (non-Mid) mode.

Once you've made module changes (introducing new modules, etc.), you may need to press Set up Large Sampling again to refresh the module pulldown. You may want to set the pulldown to "Example_large_sample" first, so that the problematic variables that caused the errors aren't re-checked (causing the same errors again). Once the modules are updated, select the module with the outputs of interest and press the button a second time to find the LSVs in that module.

Select Large sample size and Batch Sample size

Large sample size controls the Monte Carlo sample size used for the Large Sample Variables. This library lets you analyze far larger samples without running out of memory, but it does not allow an unlimited sample size. It saves the full sample for each of the LSVs, which itself takes up memory, approximately proportional to the product of the Large sample size and the sizes of any other dimensions the LSV may have.
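As a rough guide to that proportionality, the following Python sketch estimates the storage needed for a set of LSVs. It assumes 8 bytes per stored value (one double-precision number) and ignores any overhead; that figure, the function names, and the example variables (`npv`, `cash_flow`, `sales`) are assumptions for illustration, not values taken from Analytica.

```python
from math import prod

BYTES_PER_VALUE = 8  # assumption: one double-precision number per stored value

def lsv_bytes(large_sample_size, other_dims=()):
    """Approximate bytes for one LSV: sample size times the sizes of its other indexes."""
    return large_sample_size * prod(other_dims) * BYTES_PER_VALUE

def total_gb(large_sample_size, lsv_dims):
    """Approximate total storage, in GB, for a mapping of LSV name to index sizes."""
    total = sum(lsv_bytes(large_sample_size, dims) for dims in lsv_dims.values())
    return total / 1e9

# Hypothetical LSVs: a scalar, one indexed by 20 years, one by 20 years x 12 regions.
example = {"npv": (), "cash_flow": (20,), "sales": (20, 12)}
estimate = total_gb(1_000_000, example)   # about 2.09 GB, dominated by "sales"
```

In this example almost all of the memory goes to the one LSV with two extra indexes, which is why dropping a single large array from the LSVs can matter more than reducing the sample size.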

We suggest you start with a modest Large sample size, and then increase it if you want to find out how large a sample size it can handle in the memory and time you have available.

The Batch Sample size is the number of samples run in each batch. This should be smaller than the Large sample size — otherwise there’s no point in using the library!

Typically, the simulation runs a bit faster for larger batch sizes, up to the size that requires use of virtual memory. At that point, the simulation slows down considerably. To find the best Batch size for a model, start small and increase it until the simulation starts to slow down, then put it back a step.
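The search just described (start small, increase until things slow down, then step back) can be written as a short Python sketch. Everything here is hypothetical: `time_per_sample` stands for whatever timing you collect by hand from trial runs, and the doubling factor and the 1.5x slowdown threshold are arbitrary choices, not recommendations from the library.

```python
def find_batch_size(time_per_sample, start=1_000, factor=2,
                    max_size=1_000_000, slowdown=1.5):
    """Grow the batch size until the time per sample jumps, then step back."""
    best = start
    best_rate = time_per_sample(best)
    size = best * factor
    while size <= max_size:
        rate = time_per_sample(size)
        if rate > slowdown * best_rate:
            break                      # started to slow down: keep the previous size
        best, best_rate = size, rate
        size *= factor
    return best

def fake_timing(batch_size):
    """Made-up timings: slightly faster with larger batches, then a sharp slowdown."""
    if batch_size > 64_000:            # pretend this is where virtual memory kicks in
        return 1e-4
    return 1e-6 + 1e-3 / batch_size

chosen = find_batch_size(fake_timing)  # returns 64000 for these made-up timings
```

With real runs, each call to `time_per_sample` would be a timed trial at that batch size, so a coarse factor such as 2 keeps the number of trials small.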

To keep tabs on memory usage, select Show memory usage from the Window menu.

Unfortunately, Windows sometimes freezes for seconds or minutes when it runs out of physical memory, even when you have set significant virtual memory. Even when it comes back, all interactions, including mouse movements in Windows and in all applications including Analytica, can be extremely slow. This is apparently due to a defect in Microsoft Windows that Analytica cannot easily avoid. In this state, Analytica may not respond to an interrupt from typing control-period or to clicking the Cancel button in the Simulation progress bar. So, you may be forced to terminate the Analytica process via the Windows Task Manager.

Click button “Run Large sample”

This starts the simulation with the selected Large sample size and Batch sample size. It shows a progress bar while it is running.

To view Large-Sample Results

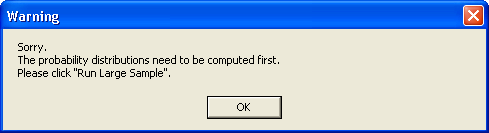

After running the large sample, you can view the results in the usual ways. For example, just click on the Output node for any LSV. Select the uncertainty view you want — confidence band, statistics, CDF, or PDF — in the usual way. Or you can select an LSV from the “Large-Sample Variables (LSVs)” pulldown menu, and click button “Show Result for this LSV” to see it. If you try to view the probability distribution of an LSV before clicking “Run Large Sample”, it will usually give a warning:

The smoothness of probability density function (PDF) views is controlled by Samples per PDF step interval, which does not change with the sample size. If you want the PDFs to appear smoother, open the Uncertainty Setup dialog (Ctrl+U), select the Probability Density tab, and enter a larger number for Samples per PDF step interval.

You may also wish to change the Line style from stepped to linear, in the Graph setup dialog, Graph style tab.

Viewing non-LSV variables

After Run Batch Sampling finishes computation, it leaves system variable SampleSize set to the Large sample size you have specified. This lets you view the probability distribution for any LSV using the Large Sample size.

Warning: Do not try to view an uncertain result for any variable that is not defined as an LSV: If you do, Analytica will try to compute the distribution using its standard simulation method using the Large sample size and it will probably run out of memory.

If you want to set a smaller Sample size and run the simulation in the usual way, reset Sample Size in the Uncertainty sample tab of the Uncertainty Setup dialog. This will delete all the Large sample results for the LSVs.

Large Sample uses Simple Monte Carlo, rather than Median Latin Hypercube sampling (Analytica’s usual default). See Uncertainty Setup dialog in the Analytica User Guide for more details.
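For readers unfamiliar with the two methods, here is a minimal Python sketch of the difference for a single normal variable. It is a generic illustration of the techniques, not Analytica's code, and the function names are hypothetical. The guide does not state why the library uses Simple Monte Carlo; the sketch only shows that median Latin hypercube points depend on the total sample size n, while simple Monte Carlo draws do not.

```python
import random
from statistics import NormalDist

def simple_monte_carlo(dist, n, rng):
    """Each sample is an independent random draw from the distribution."""
    return [rng.gauss(dist.mean, dist.stdev) for _ in range(n)]

def median_latin_hypercube(dist, n, rng):
    """Split the distribution into n equiprobable intervals, take the median
    of each interval, and shuffle the resulting values."""
    values = [dist.inv_cdf((i + 0.5) / n) for i in range(n)]
    rng.shuffle(values)
    return values

rng = random.Random(0)
a = NormalDist(10, 5)
mc_sample = simple_monte_carlo(a, 8, rng)
lhs_sample = median_latin_hypercube(a, 8, rng)
```

Because the Latin hypercube values are fixed by n, two hypercube batches of 8 are not the same thing as one hypercube sample of 16, whereas two simple Monte Carlo batches of 8 are simply 16 independent draws.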

Variables using Statistical Functions

The Large Sample Library has some special requirements and limitations for variables that use statistical functions, including Frequency, GetFract, Kurtosis, Mean, Probability, ProbBands, RankCorrel, Regression, Sample, SDeviation, and Skewness.

Statistical functions expect their main parameter(s) to be probabilistic (i.e., indexed by Run). So, to compute correctly using Large samples, their uncertain inputs must be defined as LSVs. (Statistical functions return a nonprobabilistic value — i.e. not indexed by Run. Hence variables defined with statistical functions may not themselves be LSVs.)

For example, consider a model that performs an Importance analysis on variable Y and has chance variables A and B. Selecting Make importance from the Object menu on variable Y will add these two objects:

Variable Y_importance := Abs(RankCorrel(Y_inputs, Y))

Index Y_inputs := Table(Self)(A, B)

RankCorrel(x, y) is a statistical function with two probabilistic parameters. Y_importance itself cannot be an LSV since it is not probabilistic. To make sure it computes the rank correlation with the large sample, its parameters, Y and Y_inputs, must be LSVs. You should therefore create Output nodes for them, as described above, if they are not already.

If you are not sure whether your model uses any statistical functions, enter the Large Sample Details module and view the result of Possible Problem Variables. This finds variables in the model that call statistical functions or otherwise operate over the Run index. When viewing the result, you can press Ctrl+Y to toggle between viewing the titles or identifiers, and you can double-click on the name to jump to the potential problem variable. The variable Lsl_npv_importance will probably be listed, as it is included in the example inside the Large Sample Library.

Limitation on mixed statistical and probabilistic values

The Large Sample Library cannot compute large-sample distributions for variables that combine the results of a statistical function and an uncertain quantity that is not a parameter to a statistical function. For example,

A := Normal(10, 5)

Adev := A - Mean(A)

Even if A and Adev are defined as LSVs, Adev will not be computed correctly. In some cases, as here, it is possible to rewrite the formulas to avoid this problem. In this limitation, the Large Sample Library is like most other Monte Carlo and risk analysis software (and unlike Analytica used with its standard sampling scheme).
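A small Python sketch can show one way such a mismatch arises. It assumes, as an illustration only, that a mixed expression like Adev is evaluated batch by batch, so that Mean(A) sees only the current batch; the guide does not describe the library's internals, and the names below are hypothetical.

```python
import random
from statistics import fmean

rng = random.Random(1)
large_a = [rng.gauss(10, 5) for _ in range(1_000)]        # A := Normal(10, 5)
batches = [large_a[i:i + 100] for i in range(0, 1_000, 100)]

# Batch-wise evaluation: within each batch, Mean(A) is the mean of 100 samples.
adev_batched = [a - fmean(batch) for batch in batches for a in batch]

# Standard evaluation: Mean(A) is the mean of all 1,000 samples.
full_mean = fmean(large_a)
adev_full = [a - full_mean for a in large_a]

# Each batch is centered on its own mean, so the two results differ by
# (batch mean - full mean) for every sample in that batch.
largest_gap = max(abs(x - y) for x, y in zip(adev_batched, adev_full))
```

Under this assumption, every batch of deviations is centered on a slightly different value, so the accumulated sample is not the distribution of A minus its large-sample mean.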

Useful Hints from Users

If you have used this library and figured out how to overcome problems encountered, please add tricks that you have learned here for future users.
