Formulations that Preserve Linearity for Optimization

When creating an optimization model, there are advantages to formulating your model as a linear program (LP), avoiding non-linear relationships. Linear programs solve more robustly than non-linear programs, and usually solve faster than their non-linear counterparts even when there are mixed-integer decision variables.

Many problems that seem non-linear at the outset can be converted into a linear formulation with sufficient ingenuity, often by changing the set of decision variables used to represent the problem and its search space, and by introducing auxiliary integer and boolean variables and auxiliary constraints. In general, this transformation is not easy and requires substantial creativity and analytical skills.

This page is devoted to cataloging various cases that come up with solutions for how these can be encoded in a linear fashion.

Piecewise linear relationships

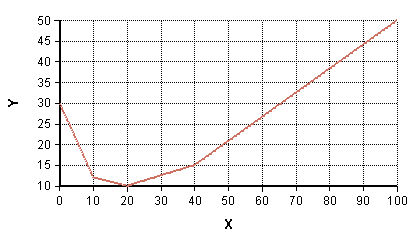

Income tax is a function of taxable income, but in the US is determined according to a piecewise-linear specification (with the slope changing at different tax brackets). In other cases, a non-linear relationship between two scalar variables can be approximated by a piecewise linear curve, as in this example:

Structured optimization in Analytica processes piecewise-linear relationships automatically for you when these appear within your model through the use of the LinearInterp function. A PWL curve is specified by a set of points, (d, r), containing the break points in the curve. In Analytica, these would be arrays «d» and «r» sharing a common index «i». The result, y is then computed as LinearInterp(d, r, x, i). To be linear, the arrays «d» and «r» cannot depend on decision variables of the optimization.

Calls to LinearInterp can appear anywhere in your model. DefineOptimization processes these automatically and adds several binary and continuous auxilliary variables to the underlying optimization problem while preserving linearity.

Semi-continuous Domains

A certain power plant can either be off, or its power output is bounded 100MW and 500MW.

Decision PowerPlantOutputDomain: Continuous(100M, 500M, orZero: true)

Static Conditional Constraints

A constraint with one or more intrinsic indexes specifies an array of scalar constraints. Conditions arise where only a subset of that array should be enforced. When the condition that defines which elements of the array should be enforced does not depend on any decision variables, then we say the conditional is static - it will remain the same through the optimization search.

Let's assume the constraint with intrinsic indexes I and J is of the form:

F(x, y)<=0only whenBis true

where F(x, y) and B are array-valued, but B does not depend on decision variables.

To accomplish this, you can force the non-active constraints to be vacuous, or you can cause their constraint part to be Null, or you can transform the constraint to include only the active components. Each will be demonstrated here.

- Make the constraint vacuous when

Bis false

- Make the constant be Null when

Bis false

- Transform to include only the active constraints.

Index K := 1..Sum(B, I, J)Index L := ['I', 'J', 'F']

MdArrayToTable(If B Then F(x, y) Else Null, K, L)[L = 'F'] <= 0

The transformation approach minimizes the number of scalar constraints in the LP formulation, so is essentially the most efficient of these.

Varying Conditional Constraints

When a constraint should be enforced only under certain conditions, and those conditions vary with the value of decision variables, this is referred to as a varying conditional constraint.

In psuedo-code (but not actual Analytica syntax), a varying conditional constraint is like:

If B(x, y) Then (F(x, y) <= 0)

where B is a boolean 0,1 condition that is linear in the decision variables (usually involving boolean decision variables). We write B and F in function call notation to emphasize the dependence on decision variables.

In a non-linear formulation, this can be easily captured using a vacuous constraint method:

F(x, y) <= If B(x, y) Then 0 else INF

This formulation, however, is not linear. A solution is to write

F(x, y) <= (1 - B(x, y))*10M

This formulation preserves linearity and works as long as it can be guaranteed that F(x, y) would never exceed 10M. (You would adjust the big number appropriately for your problem, of course). You cannot use INF as the big number since 0*INF is NaN (not 0). It is best to keep the big number as small as possible since this has an impact on the numeric stability of the solution process.

Using this technique, a varying conditional equality constraint must be broken into two inequality constraints.

If B(x, y) Then (F(x, y) = 0)

is encoded with these two linear constraints:

F(x, y) <= (1 - B(x, y))*10M(B(x, y) -1)*10M <= F(x, y)

Leave "On" for a minimum number of periods

If a power plant is turned on, it must remain on for a minimum of the following three time periods (i.e., is on for at least 4 consecutive time periods). This can be encoded by introducing an auxiliary boolean variable, PlantCommit, when is true when we are committed to at least 3 more periods of being on.

Decision PlantOnDomain: Boolean()Intrinsic Index: Time

Decision PlantCommitDomain: Boolean()Intrinsic Index:Time

Index Steps := 0..3

Constraint: Stay_on_for_3Intrinsic Index: TimeDefinition: sum(PlantOn[@Time = @Time + Steps], Steps) >= 4*PlantCommit

Constraint On_During_CommitIntrinsic Index: TimeDefinition: PlantCommit <= PlantOn

Penalty for a change from a nominal value

You want to incur a penalty if the decision variable differs from some nominal value. For example, if you were to re-balance your portfolio, you would incur a sales commission.

Index Holding

Decision AllocationDomain: Continuous(0, max_allocation)Intrinsic: Holding

Variable CurAllocationDefinition: Table(Holding)

Decision ChangeDomain: Boolean()Intrinsic: Holding

Constant Penalty_for_change := 10

Variable PenaltyDefinition: Sum(Penalty_for_change * Change, Holding)

Constraint Enforce_changeDefinition: -Max_allocation*Change <= Allocation - CurAllocation <= Max_allocation*ChangeIntrinsic: Holding

To see this idea encoded (in a car-dealer context), see the following example model:

Penalize_Change_in_LP.ana

Mixed Complementarity, #1

A simple form of mixed complementarity is the following:

- [math]\displaystyle{ x=0 \mbox{ and } 0\lt =F(x)\mbox{, or } 0\lt =x \mbox{ and } F(x)=0 }[/math]

Geometrically, on a plot where x is on the horizontal, and the constraint is the vertical axis, this mixed complementarity constraint specifies that the solution must lie on either the positive x axis or on the positive y axis.

The above is equivalent to the following non-linear formulation:

- [math]\displaystyle{ x\gt =0, F(x)\gt =0 \mbox{ and } x\cdot F(x)=0 }[/math]

This representation of complementarity cannot be captured exactly in a linear form (with F(x) being linear) using the mixed-integer mechanisms in Structured Optimization, but an approximation to it can be, where the approximation is:

- [math]\displaystyle{ 0\lt =x\lt =U, 0\lt =F(x)\lt =U\mbox{, and } x\cdot F(x)=0 }[/math]

or equivalently:

- [math]\displaystyle{ x=0 \mbox{ and } 0\lt =F(x)\lt =U\mbox{, or } 0\lt =x\lt =U \mbox{ and } F(x)=0 }[/math]

In other words, we've artificially imposed an upper bound. If the constant U is made very large, then the approximation approaches the desired formulation (but for numeric stability, it is preferable to keep U as small as reasonable).

The formulation is captured with linear constraints by introducing an auxiliary boolean variable, b:

0 <= x <= (1 - b) * U0 <= F(x) <= b * U

Mixed Complementarity, #3

Another general form of mixed complementarity is where one of the following must hold:

- [math]\displaystyle{ \begin{array}{ccc} L=x & \mbox{and} & F(x)\lt =0 \\ L\lt =x\lt =U & \mbox{and} & F(x)=0 \\ x=U & \mbox{and} & F(x)\gt =0 \end{array} }[/math]

Again, an approximation must be employed which adds an artificial constraint:

- [math]\displaystyle{ -K \lt = F(x) \lt = K }[/math]

where [math]\displaystyle{ K }[/math] is sufficiently large constant. With the addition of the artificial constraint, the MCP is encoded by introducing three boolean variables, b1, b2, and b3, and encoding as

b1 + b2 + b3 = 1L + (U - L)*b3 <= x <= U - (U - L)*b1-K + K*b2 <= F(x) <= K - K*b2

B := Max(A,I)

When you have the relationship B is defined as Max(A,I) within your model, this can often be linearized by adding B as another decision variable in the LP, and then adding a new constraint:

A <= B

and set the constraint's intrinsic index to be I.

This will work IF your objective creates an incentive for B to be as small as possible. In many models, this often is the case, since you are often minimizing a net cost, and more B would drive up costs. So if you are in this case, it is easy.

It should be clear that the same idea works for B := Min(A,I), as long as the Objective incentivizes B to be as large as possible.

When you don't have this natural incentive in the right direction, you can add in a type of varying conditional constraint. Introduce another boolean decision variable Bi, with intrinsic index I. This will be set true in the solution for the position where the Max(A,I) found the max. Then add these two constraints (the first one replaces the constraint above):

A <= B <= A + (1-Bi)*10M

and

Sum(Bi,I)=1

where the constant 10M needs to exceed the spread of values in A.

By adding B as a decision variable to your LP and adding the constraint(s), you've linearized the Max(A,I) expression for the optimizer when the LP is solved. You should leave the definition of B as Max(A,I), so that when you evaluate your model outside of optimization, you still compute the Max. This also helps to document your logic in a natural way.

Please Contribute

If you encounter cases like these that may be of general interest, then please add them here along with the method you found for expressing them in a linear fashion.

Enable comment auto-refresher